How We Built Model Forge: Autonomous Training Infrastructure for Domain-Specific LLMs

We've been training domain-specific models for European labour relations since the early days of the platform. General-purpose LLMs can handle the basics, but when precision across dozens of jurisdictions matters — correct citations, country-specific obligations, structured analysis teams can rely on — they fall short of what our users need. Model Forge is the latest iteration of the infrastructure we use to close that gap.

European labour relations is one of those domains where close enough isn't good enough. When a proposed AI monitoring system triggers §87(1) No. 6 of the Betriebsverfassungsgesetz, the platform needs to surface that correctly. When a restructuring may invoke L.1233-3 consultation obligations in France, HR teams need the right reference points — not hallucinated citations or analysis grounded in the wrong country's framework.

General-purpose models can get you surprisingly far with prompt engineering, but they hit a ceiling. They confuse Austrian ArbVG provisions with German BetrVG ones. They hallucinate article numbers. They give confident answers grounded in the wrong country's legal framework. For a platform that multinational companies rely on for works council compliance, that failure mode is unacceptable. We learned this early, which is why we've been fine-tuning our own models from the start.

Model Forge is the current generation of that infrastructure — our internal system for training, evaluating, and continuously improving domain-specific language models. This article covers the three ideas that make it work.

Why We Train Smaller Models (On Purpose)

There's a prevailing assumption in the industry that bigger is always better — that you should reach for the largest model you can afford and throw cloud GPU hours at it until the loss curve flattens. We think that's wrong for domain-specific applications, and our experience building labour relations models has reinforced that.

A 700B parameter general-purpose model knows a little about everything. A 20B model fine-tuned on thousands of jurisdiction-specific labour law scenarios knows a lot about the thing that actually matters to our users. In head-to-head evaluations on our benchmark — 132 samples across 7 task types covering dozens of European jurisdictions — our fine-tuned smaller models consistently outperform general-purpose models many times their size on the metrics that count: correct legal citations, jurisdiction-appropriate analysis, and structured output that lawyers and works council members can actually use.

Smaller models also have practical advantages that compound over time. They're cheaper to serve, faster at inference, easier to iterate on, and deployable on infrastructure you control. When you're running a compliance platform where data sovereignty matters, being able to serve a model from your own hardware rather than routing sensitive employee relations data through a third-party API isn't a nice-to-have — it's a requirement for many of our enterprise customers.

This philosophy shaped our hardware choices. Rather than renting A100 or H100 clusters by the hour — where costs scale linearly with experimentation and you're incentivised to stop iterating once the bill gets uncomfortable — we invested in NVIDIA DGX Spark infrastructure with GB10 Grace-Blackwell GPUs and 128GB of unified memory per node. It sits on our own network. We can run experiments at 3am without watching a billing dashboard. We can iterate 50 times on a hyperparameter search without calculating whether each run is "worth it." The economics of owned hardware fundamentally change how aggressively you can optimise.

The tradeoff is that 128GB of unified memory requires discipline. You can't brute-force your way past memory limits by spinning up more nodes. Every architectural decision — quantisation strategy, LoRA rank, sequence length, batch size — has to be made with the memory envelope in mind. That constraint turned out to be a feature, not a bug. It forced us to build smarter infrastructure.

Autoresearch: A Self-Directing Hyperparameter Search

Anyone who's fine-tuned a large model knows the drill. You pick some hyperparameters based on a paper you read, run training for a few hours, check the metrics, adjust, repeat. It's slow, manual, and biased toward whatever you tried last time. Most teams end up with hyperparameters that are "good enough" rather than actually optimal, because the search space is vast and every experiment costs real compute — or real money, if you're paying by the hour on cloud GPUs.

Because we own our hardware, we can afford to be systematic about this. Inspired by Andrej Karpathy's autoresearch concept, we built a system that runs short training experiments, evaluates each one against a held-out benchmark, and uses the results to decide what to try next. The key insight is that the search isn't random — it follows a three-phase strategy that balances exploration with exploitation.

Phase 1 — Explore (35% of budget): Cast a wide net. Sample configurations randomly from the full search space to establish a landscape of what works and what doesn't.

Phase 2 — Exploit (40% of budget): Take the best configuration found so far and perturb it one parameter at a time. This is essentially a coordinate descent in hyperparameter space — change the learning rate while holding everything else fixed, then change the LoRA rank, and so on.

Phase 3 — Refine (25% of budget): Zoom in. For the best numeric parameters, try values between the discrete grid points. If 2e-4 was the best learning rate and 1e-4 was the next-best, try 1.5e-4.

class SearchStrategy:

"""Three-phase search: explore -> exploit -> refine."""

def __init__(self, search_space, default_config, total_iterations, seed=42):

self.space = search_space

self.best_config = copy.deepcopy(default_config)

self.best_score = -1.0

self.rng = random.Random(seed)

# Phase boundaries

self.explore_end = max(1, int(total_iterations * 0.35))

self.exploit_end = max(self.explore_end + 1, int(total_iterations * 0.75))

# Shuffle parameter order for exploit phase

self._exploit_params = list(self.space.keys())

self.rng.shuffle(self._exploit_params)

self._exploit_idx = 0

def propose(self, iteration):

phase = self.phase(iteration)

if phase == "explore":

return self._propose_explore()

elif phase == "exploit":

return self._propose_exploit()

else:

return self._propose_refine()

def _propose_refine(self):

"""Interpolate between known-good values for numeric params."""

config = copy.deepcopy(self.best_config)

numeric_params = [

p for p in self.space

if isinstance(self.space[p][0], (int, float))

and p != "max_seq_length"

]

param = self.rng.choice(numeric_params)

old_val = config[param]

values = sorted(self.space[param])

idx = closest_index(values, old_val)

neighbours = []

if idx > 0:

neighbours.append(values[idx - 1])

if idx < len(values) - 1:

neighbours.append(values[idx + 1])

# Interpolate between grid points for float params

if isinstance(old_val, float):

if idx > 0:

neighbours.append((values[idx - 1] + old_val) / 2)

if idx < len(values) - 1:

neighbours.append((old_val + values[idx + 1]) / 2)

config[param] = self.rng.choice(neighbours)

return self._apply_oom_guards(config)What makes this more than just another search algorithm is the refinement phase. Most hyperparameter searches are limited to a predefined grid. Ours generates new candidate values on the fly by interpolating between the best-known points. When the difference between a learning rate of 1e-4 and 2e-4 matters — and in LoRA fine-tuning, it absolutely does — being able to try 1.5e-4 without pre-defining it gives us meaningfully better results.

Hardware-Aware Search Constraints

The second piece that makes autoresearch practical is the OOM guard system. When you're running an autonomous search on fixed hardware, a single out-of-memory crash doesn't just waste one experiment — it corrupts GPU state, forces a full model reload, and can stall the entire search for minutes. The naive solution is to be conservative and avoid the edges of what the hardware can do. We wanted to explore those edges safely.

Instead of catching OOM errors reactively, we prevent dangerous configurations before they execute:

OOM_GUARDS = [

(lambda c: c["lora_r"] >= 128 and c["max_seq_length"] >= 8192,

"LoRA r>=128 with seq_length>=8192 risks OOM"),

(lambda c: c["per_device_train_batch_size"] >= 4 and c["max_seq_length"] >= 8192,

"batch_size>=4 with seq_length>=8192 risks OOM"),

(lambda c: "lm_head" in c.get("target_modules", []) and c["lora_r"] >= 64,

"lm_head target with r>=64 risks OOM"),

]

def _apply_oom_guards(self, config):

"""Downgrade values that would risk OOM."""

for guard_fn, _desc in OOM_GUARDS:

if guard_fn(config):

if config.get("max_seq_length", 0) >= 8192:

config["max_seq_length"] = 4096

if config.get("lora_r", 0) >= 128:

config["lora_r"] = 64

if config.get("per_device_train_batch_size", 0) >= 4:

config["per_device_train_batch_size"] = 2

if "lm_head" in config.get("target_modules", []):

config["target_modules"] = [

m for m in config["target_modules"] if m != "lm_head"

]

return configThese guards encode hard-won knowledge about what combinations of parameters actually fit in our memory envelope. Rather than a simple parameter cap, they model the interactions between parameters — LoRA rank 128 is fine with sequence length 4096, and sequence length 8192 is fine with LoRA rank 64, but the combination of both will crash. The system automatically downgrades the more impactful parameter, keeping the search productive rather than wasting iterations on configurations that can never succeed.

This is the kind of infrastructure that only makes sense when you own your hardware and know its exact capabilities. Cloud GPU instances abstract away the memory model, which is convenient right up until your autonomous search burns through your budget crashing on configurations that were never going to work.

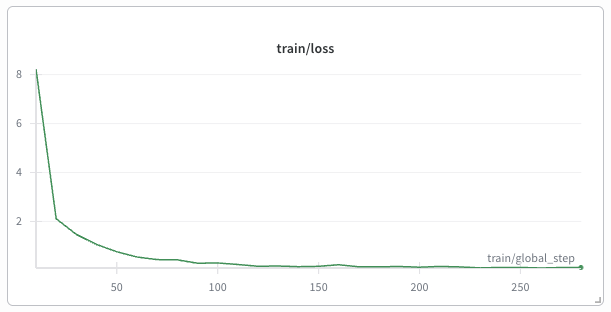

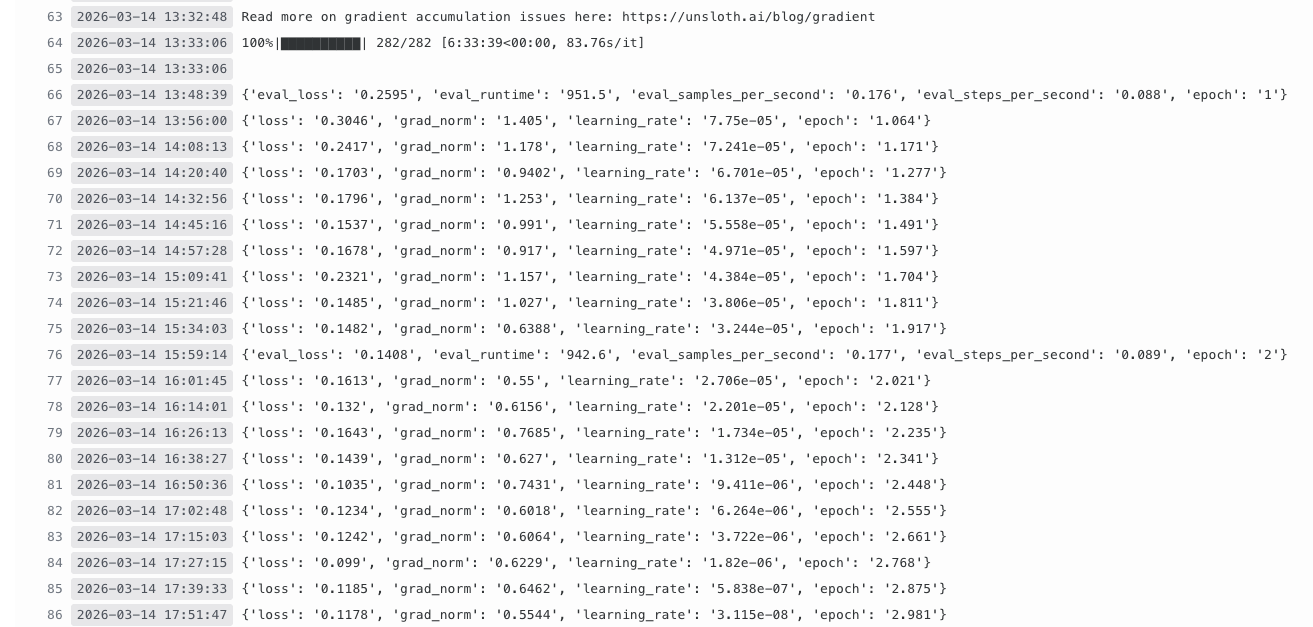

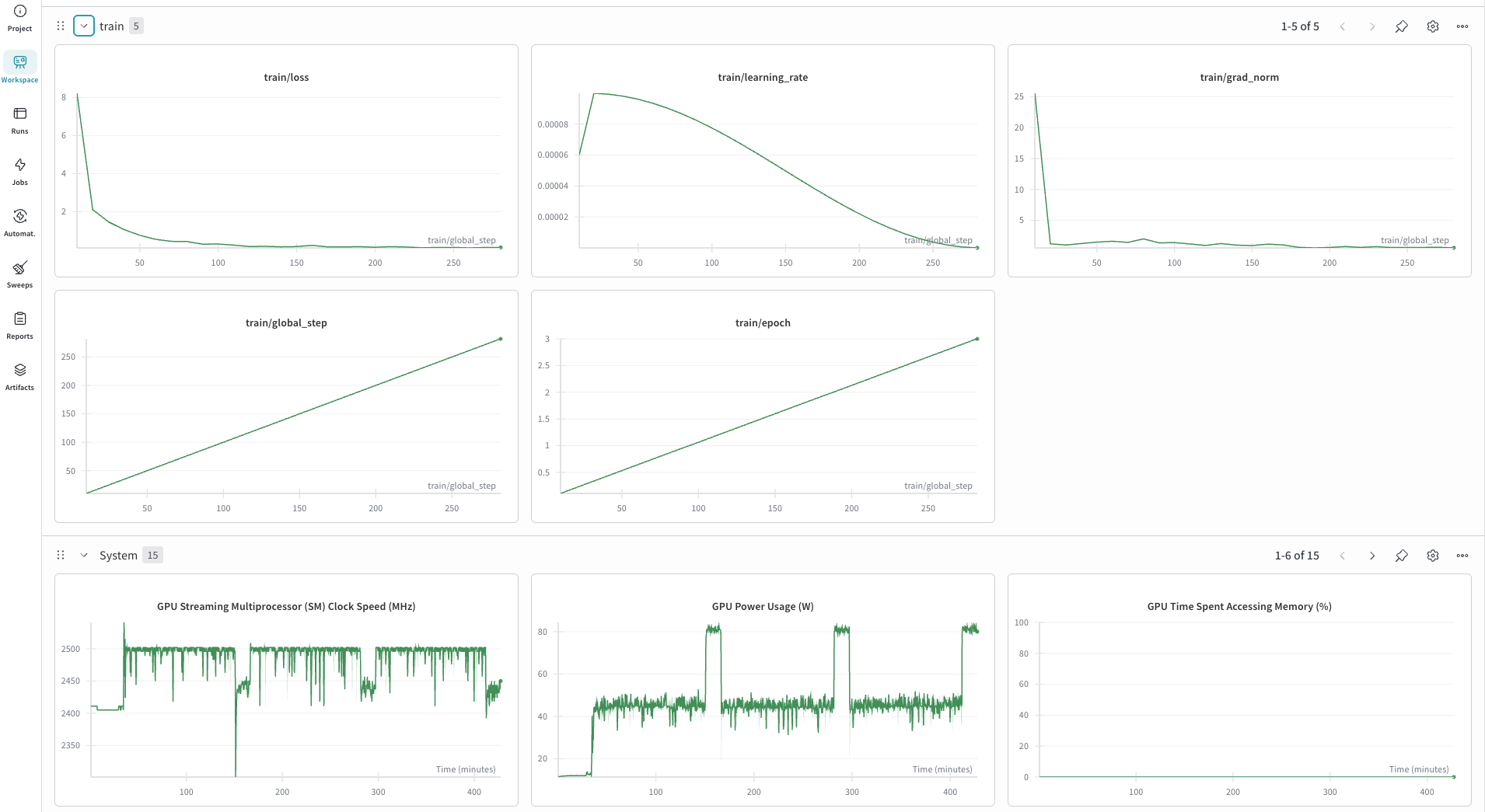

Each experiment trains for 50 steps (roughly two minutes), evaluates on 20 held-out samples, and records the full result — including the configuration, ROUGE scores, training loss, status, and duration. Every run is logged to Weights & Biases, giving us full visibility into what the search is doing without watching it live. When the search completes, the best configuration is evaluated on the full 132-sample benchmark. The entire system is resumable: you can stop it, review the results, and restart from exactly where it left off.

Why This Matters

Autoresearch isn't just about finding better hyperparameters. It's about removing the human bottleneck from the optimisation loop. A researcher can reasonably test 3–5 configurations in a day. Autoresearch runs 20 experiments in an hour, with each experiment informed by every previous one. The three-phase structure means it doesn't just randomly stumble into good configurations — it systematically narrows down on them. And because each run costs us nothing beyond electricity, we can re-run the entire search every time we update our training data.

Knowledge Distillation: Compressing Expertise Into Deployable Models

Fine-tuning on the largest model you have access to gives you the best training signal. But the model you train on doesn't have to be the model you deploy. We use a two-stage distillation pipeline that separates training quality from inference efficiency.

Our fine-tuned 120B teacher — trained with the best configuration autoresearch found — produces excellent responses. But serving a 120B model in production, even quantised, requires dedicated hardware per instance. For a platform that needs to handle concurrent requests across multiple customers, the economics don't work.

The distillation pipeline compresses the teacher's knowledge into a 20B student:

┌─────────────────────────────────────────────────────────────────────┐

│ DISTILLATION PIPELINE │

│ │

│ ┌──────────────┐ ┌──────────────────────┐ ┌──────────────┐ │

│ │ Training │───▶│ 120B Teacher (4-bit) │───▶│ Teacher │ │

│ │ Prompts │ │ + LoRA adapter │ │ Responses │ │

│ │ (JSONL) │ │ (inference mode) │ │ (JSONL) │ │

│ └──────────────┘ └──────────────────────┘ └──────┬───────┘ │

│ │ │

│ ▼ │

│ ┌──────────────────────┐ ┌──────────────┐ │

│ │ 20B Student │◄───│ Student │ │

│ │ + LoRA (r=32) │ │ Training │ │

│ │ (deployable) │ │ (SFT) │ │

│ └──────────────────────┘ └──────────────┘ │

└─────────────────────────────────────────────────────────────────────┘Step 1 loads the fine-tuned 120B model with its LoRA adapter in 4-bit quantisation and generates responses for every training prompt. These responses carry the teacher's domain knowledge — the correct legal citations, the jurisdiction-specific analysis, the structured output format — encoded as text rather than as model weights. The generation runs with temperature 0.7 and top-p 0.9 to produce diverse but high-quality outputs.

Step 2 trains the 20B student on these teacher-generated responses using supervised fine-tuning with a smaller LoRA configuration (rank 32 vs. the teacher's rank 64). The student learns to mimic the teacher's reasoning patterns without needing to discover them from scratch.

Both steps run on a single DGX Spark node. The 120B teacher in 4-bit quantisation consumes roughly 60GB, leaving headroom for inference buffers. After generation completes, the teacher is fully unloaded — including flushing system memory caches — before the student loads. Running the entire distillation on a single box that we own means we can iterate on this process as often as we need to, without provisioning cloud instances or managing data transfer.

The result is a 20B model that retains the bulk of the teacher's domain-specific capability at a fraction of the serving cost — deployable through Ollama on hardware that any mid-size company can afford. This is the model our platform actually serves to users. The 120B teacher exists solely to make the 20B student better.

Training Data That Encodes Legal Expertise

The third differentiator is how we build training data. Most fine-tuning datasets are either scraped from the internet or generated by prompting a foundation model with generic instructions. Neither approach works when your model needs to cite specific articles of the German Betriebsverfassungsgesetz or the French Code du Travail with the precision that a works council advisor expects.

Our synthetic data generation pipeline encodes actual legal frameworks as structured knowledge:

┌─────────────────────────────────────────────────────────────────────┐

│ SYNTHETIC DATA GENERATION │

│ │

│ ┌──────────────────┐ ┌───────────────────┐ ┌──────────────┐ │

│ │ 15 Legal │ │ 12 Company │ │ 5 Change │ │

│ │ Frameworks │ │ Archetypes │ │ Types │ │

│ │ (with articles, │ x │ (with countries, │ x │ (AI, reorg, │ │

│ │ triggers, │ │ industries, │ │ outsource, │ │

│ │ obligations) │ │ employee counts) │ │ policy...) │ │

│ └────────┬─────────┘ └────────┬──────────┘ └──────┬───────┘ │

│ │ │ │ │

│ └───────────────┬───────┘──────────────────┘ │

│ ▼ │

│ ┌─────────────────┐ │

│ │ Scenario │ │

│ │ Compositor │ │

│ │ (realistic │ │

│ │ combinations) │ │

│ └────────┬────────┘ │

│ │ │

│ ┌────────────┴────────────┐ │

│ ▼ ▼ ▼ │

│ ┌──────────┐ ┌──────────┐ ┌──────────┐ │

│ │ CBA │ │ Change │ │ Legal │ ... 7 task types │

│ │ Summary │ │ Assess. │ │ Advisory │ │

│ │ Tasks │ │ Tasks │ │ Tasks │ │

│ └──────────┘ └──────────┘ └──────────┘ │

└─────────────────────────────────────────────────────────────────────┘Each country's legal framework is represented as a structured object containing the governing legislation, the relevant employee representation body, specific triggering provisions (with article numbers), obligation levels (information, consultation, or co-determination), escalation paths, and data protection requirements. When the generator creates a scenario — say, a German automotive company deploying an AI performance monitoring system — it doesn't guess at the relevant law. It pulls from the framework:

- §87(1) No. 6 BetrVG triggers co-determination because the system monitors employee behaviour

- §90 BetrVG triggers information rights because it changes work processes

- The Betriebsrat must be consulted, and failure to do so can be escalated to the Einigungsstelle

This isn't a prompt template with blanks to fill in. It's a combinatorial engine that produces thousands of realistic, legally grounded scenarios across dozens of jurisdictions, 12 company archetypes, and 7 task types. The resulting training data teaches the model not just what labour law says, but how it applies in specific, realistic situations — which is ultimately what our users need to know.

The Full Loop

These three systems work together as a closed pipeline. Domain-encoded synthetic data feeds the training process. Autoresearch optimises the training configuration autonomously. The best configuration produces a fine-tuned teacher, which is distilled into a deployable student model.

But the more important thing is that this loop is repeatable. When new legislation passes — and in European labour relations, it always does — we update the legal framework definitions, regenerate training data, re-run autoresearch, and produce an updated model. The entire cycle from "new regulation published" to "updated model deployed" can happen in days, not months. Because we own the hardware and the search is autonomous, the marginal cost of retraining is effectively zero.

We think the industry's fixation on model scale has obscured a more interesting question: how do you build infrastructure that makes domain-specific models better, faster, and cheaper over time? Scale helps with general knowledge. But for the kind of precise, jurisdiction-aware, citation-accurate analysis that regulated industries demand, the answer isn't a bigger model — it's a better training loop.

Model Forge is our answer to that question. The labour relations domain was our starting point because it's where Graylark operates. But the infrastructure is domain-agnostic. Any field with structured regulatory knowledge, jurisdiction-specific rules, and a need for precision over generality could benefit from this approach.

Model Forge is the training infrastructure behind Graylark's AI platform. To learn more about how we help multinational companies navigate employee representation across Europe, visit Graylark Technologies.